Technology has always changed how medicine is practiced. The stethoscope, the portable ultrasound, the electronic medical record. Each one arrived with promise and skepticism in equal measure. Artificial intelligence (AI) is no different, except that this time, the stakes are higher and the transformation faster.

We are now approaching, and perhaps have already entered, a new chapter in which AI can genuinely facilitate clinical excellence rather than stand in the way of it. But reaching that future requires something technology alone cannot provide: trust.

New research on AI in healthcare, commissioned by EBSCO Clinical Decisions, reveals the precise shape of that trust challenge, and it’s more nuanced than most headlines suggest. The findings, drawn from a survey of 1,000 U.S. clinicians and a separate survey of 1,000 U.S. consumers, explore how AI is actually being used in care delivery, and how both clinicians and patients feel about it.

The results confirm what many of us sense already: clinical AI is here and it’s saving us time, but there is a growing trust gap between the people who use it and the people we use it for.

Every Generation of Clinicians Has Had to Adapt. This One Is No Different.

Not long ago, the electronic health record (EHR) was the healthcare disruptor. Before it, the idea that a computer screen would sit between a clinician and a patient felt like a threat to everything the exam room was supposed to be. And in some ways, it was. At first. The transition was clumsy. The technology demanded attention that belonged to the patient.

But clinicians adapted. They learned to use the tool without becoming subordinate to it. And the practice of medicine was better for it.

AI is the next chapter of that story. The clinicians who will define the standard of care in the years ahead are not the ones who resist it, but the ones who learn to use it well. With discernment, with transparency, and with the patient relationship as the fixed point around which everything else turns.

The question isn't whether AI belongs in the exam room. The data makes clear it does. The question is what kind of AI, and how it should be used.

The Case for Clinical Decision Support AI: The Evidence Gap Is Real, and It's Costing Clinicians Time They Don't Have

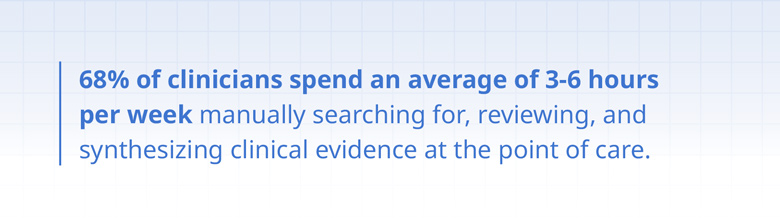

Medicine has always required clinicians to stay current. But the volume of published evidence has grown faster than any individual can meaningfully track. Clinical guidelines are updated regularly. Trial results overturn long-held assumptions. New drug interactions are discovered. Staying on top of research has become one of the most quietly exhausting parts of the job.

Our survey found 68% of clinicians spend three to six hours per week manually searching for and synthesizing clinical evidence at the point of care. That work primarily happens between patient visits or after hours. Time taken from patient care, from family, from rest. It’s not sustainable, and it doesn't have to be.

AI-CDS: Built for the Complexity of Clinical Practice

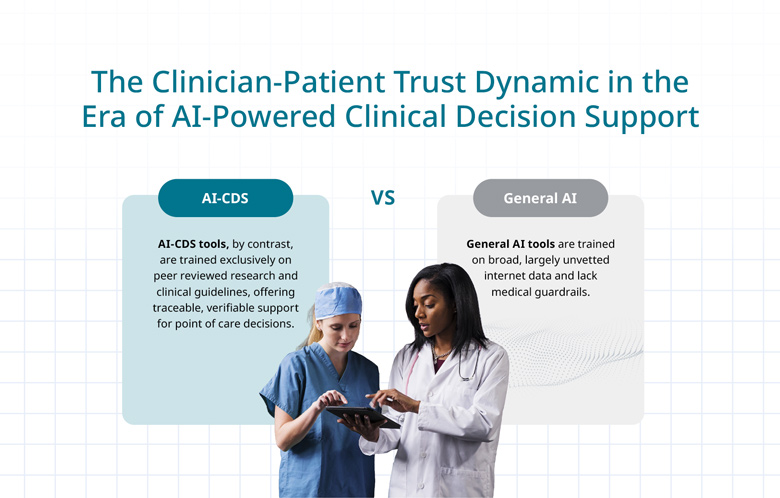

This is precisely what evidence-based, clinical decision support AI is designed to address. Unlike general-purpose AI tools built on broad, unvetted internet data, AI-CDS tools are trained exclusively on peer-reviewed research and established clinical guidelines. They surface relevant, verifiable guidance within the clinical workflow, at the moment of decision, with every recommendation traceable to its source.

The results speak for themselves. Three-quarters (75%) of clinicians report saving four or more minutes per patient encounter with AI-CDS. Nearly a quarter (23%) save 10 minutes or more. And 87% say it reduces cognitive load, freeing mental energy from information retrieval and returning it to where it belongs: clinical reasoning, patient context, and the judgment that no algorithm can replace.

How Is Artificial Intelligence Being Used in Healthcare? What the Data Tells Us About Clinical AI

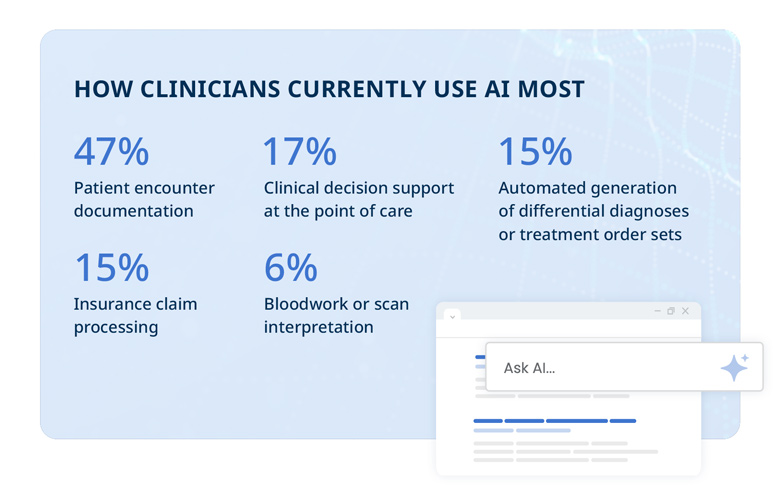

AI adoption in clinical settings follows a familiar arc. It begins where the burden is highest and the risk feels lowest. Today, documentation is the primary use case, with 47% of clinicians reporting they use AI to capture patient encounter notes — a use case 75% of surveyed consumers are comfortable with, per our research.

But the story doesn't stop there. Beyond documentation, clinicians report using AI for clinical decision support at the point of care (17%), differential diagnosis automation (15%), and lab and imaging interpretation (6%).

This new research makes it clear that AI is moving steadily from the administrative layer toward the clinical one, and the clinicians leading the way are showing the rest of the profession what responsible adoption looks like.

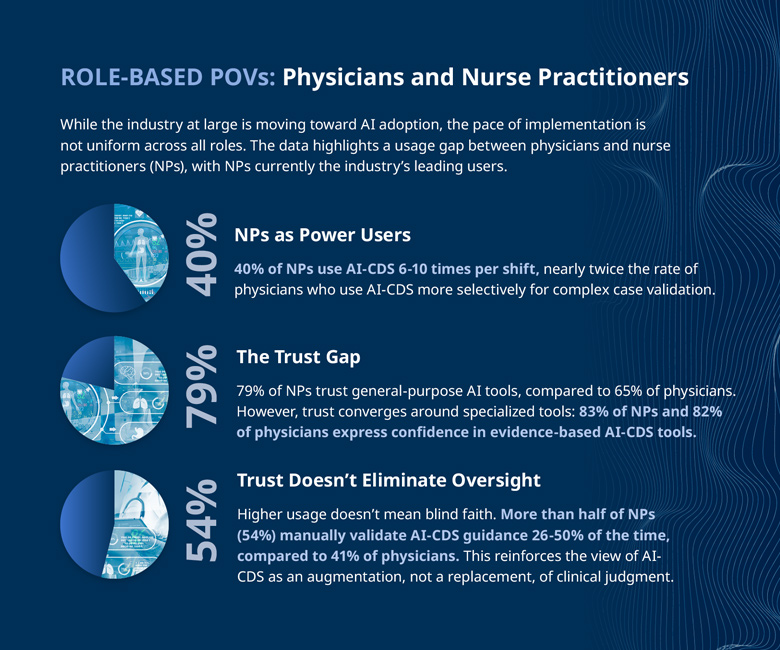

Nurse Practitioners Are Paving the Way as Early Adopters

Among the most instructive findings is the role of nurse practitioners (NPs) as early adopters. In fact, 40% of NPs use AI-CDS six to 10 times per shift. And their approach is worth noting: more than half manually verify AI-CDS guidance 26% to 50% of the time.

High usage paired with active oversight is not a contradiction. It is the model. AI-CDS earns its place in clinical practice by informing decisions, not by making them. The clinician's judgment remains the final word.

AI in Healthcare Research Shows Trust as the Differentiator

Here is where the research on AI in healthcare becomes most instructive for organizations weighing their AI strategy. Trust in healthcare AI does not exist on a single spectrum. It bifurcates clearly based on the type of tool.

Our survey found 80% of clinicians trust clinical guidance from healthcare-specific, evidence-based AI-CDS tools. Among consumers, the pattern is even sharper: 66% say their confidence in a recommendation would increase if a specialized AI tool were used, while 54% say it would decrease if a general-purpose AI were involved instead.

This is not a subtle preference, but rather a clear mandate. The architecture of trust is either built into the design of the tool or it is absent entirely. Healthcare organizations that treat all AI as interchangeable do so at real risk to patient confidence and clinical credibility.

Transparency Isn't Optional. It's the Foundation.

When it comes to transparency in AI-powered clinical decision support, clinicians require a glass-box approach: every AI output must be traceable to its source evidence. The features they cite as most essential include direct links to source literature (24%), strong data privacy protections (23%), human-specialist review and vetting (22%), and clear transparency into how conclusions are reached (21%).

For patients, disclosure is a matter of ethics, not preference. Our data found that 56% of consumers say it would be a violation of trust if their provider used general-purpose AI without informing them. Transparency, proactively offered, is what separates AI that builds the care relationship from AI that quietly strains it.

The Real Payoff: More Time for the Heart of Medicine

When AI-CDS absorbs the work of evidence synthesis — the manual searching, the cross-referencing, the after-hours research — it doesn't just return time to the clinician. It returns presence. And presence, in medicine, is everything.

The data is clear: 89% of clinicians believe AI-CDS will lead to better patient outcomes and higher quality care. Similarly, 67% of consumers agree that time saved by AI will make their providers more engaged and better communicators. When clinicians were asked what they would do with their reclaimed time, the top answer was spending more meaningful time with existing patients (23%).

That is the signal worth following. Designed with transparency, built on evidence, and deployed with the patient relationship as the measure of success, AI-CDS is not a threat to the practice of medicine. It is one of the most promising tools we have had in a generation to practice it better.

The Full Picture Is in the Report

This article offers a window into the findings. The complete view — including how healthcare organizations can close the clinician-patient trust gap, the ethical framework for AI disclosure, role-by-role adoption breakdowns, and the operational steps that define responsible AI-CDS deployment — is available in the full report.